Clinical AI has captured substantial executive attention and significant capital investment. Across the country, health systems are piloting ambient documentation tools, predictive risk models, diagnostic imaging algorithms, and AI-assisted clinical decision support. The promise is real—AI does demonstrably improve performance across several of these categories.

The results, however, are uneven. Early-adopter health systems that deployed clinical AI aggressively are reporting mixed outcomes: high adoption in some domains, significant friction in others, and in several cases, AI-generated recommendations that clinicians don’t trust and therefore don’t follow.

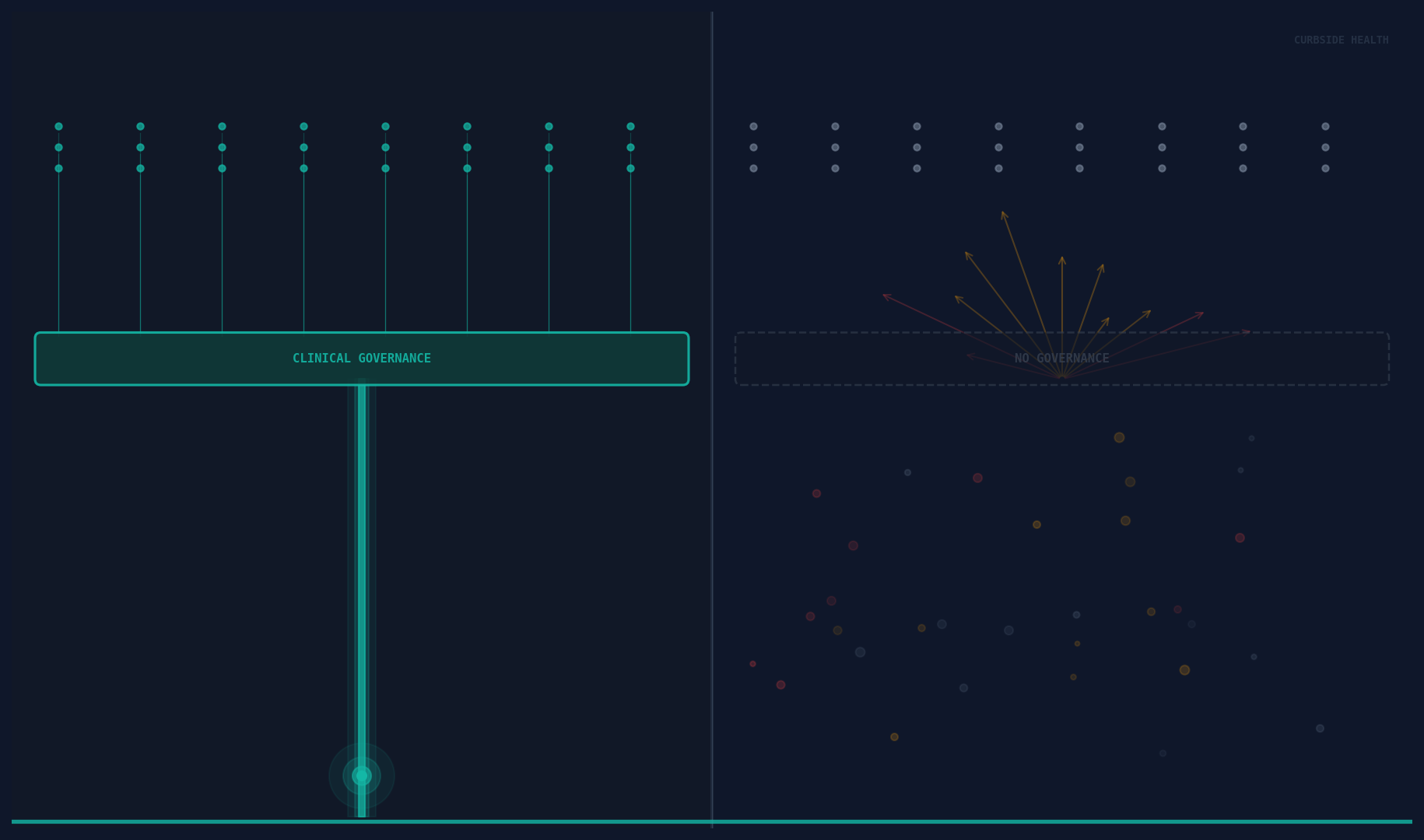

The failure mode is almost never the algorithm. It’s the governance layer underneath it.

AI Without Governance Is a Speed Problem

The core promise of AI in clinical decision support is speed: faster synthesis of evidence, faster surfacing of relevant protocols, faster identification of patients at risk. These are genuine advantages.

But speed only creates value if it’s moving in the right direction. AI that rapidly surfaces a clinical recommendation that doesn’t reflect the institution’s current guidelines, local antibiogram, or specific clinical standards is not useful—it’s noise that clinicians learn to ignore.

Generic clinical AI, the kind built on aggregate population data, broad literature synthesis, or national guidelines, produces recommendations that are correct on average. But average is not useful in a clinical encounter. What is useful is the right antibiotic for this patient, at this hospital, based on this institution’s own resistance patterns and approved empiric therapy guidelines.

When AI is deployed on top of absent or outdated clinical governance infrastructure, it cannot produce locally valid recommendations. The algorithm has no locally valid inputs to work with.

The Common Mistake

The most common clinical AI implementation mistake is treating AI as a replacement for governance rather than an accelerant of it.

This manifests in a predictable pattern. A health system identifies AI-assisted clinical decision support as a strategic priority. It evaluates vendors, selects a platform, completes an EHR integration, and launches. Clinicians begin receiving AI-generated recommendations. Adoption is initially promising because novelty drives engagement.

Then, gradually, trust erodes. Recommendations don’t match the pharmacy formulary. A suggested antibiotic is one the institution’s stewardship committee has moved away from. A pathway suggestion reflects a guideline version that was superseded twelve months ago. Clinicians start clicking past the recommendations the same way they click past EHR alerts.

The vendor gets blamed. The problem is the governance layer.
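The mismatches described above (off-formulary drugs, agents stewardship has moved away from, superseded guideline versions) are the kind of thing a governance layer can check mechanically, provided it exists and is current. The following is a minimal sketch of such a check, not a description of any vendor’s product. Every name in it (`LocalStandards`, `Recommendation`, `local_conflicts`, the field names, and the placeholder drug and guideline identifiers) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class LocalStandards:
    """Hypothetical snapshot of locally governed content."""
    formulary: set[str]                 # drugs the pharmacy currently approves
    deprecated: set[str]                # drugs stewardship has moved away from
    approved_versions: dict[str, str]   # guideline id -> locally approved version


@dataclass
class Recommendation:
    """Hypothetical shape of one AI-generated suggestion."""
    drug: str
    guideline_id: str
    guideline_version: str


def local_conflicts(rec: Recommendation, standards: LocalStandards) -> list[str]:
    """Return the ways a recommendation disagrees with local standards."""
    problems = []
    if rec.drug not in standards.formulary:
        problems.append(f"{rec.drug} is not on the local formulary")
    if rec.drug in standards.deprecated:
        problems.append(f"{rec.drug} has been deprecated by stewardship")
    approved = standards.approved_versions.get(rec.guideline_id)
    if approved is None:
        problems.append(f"no locally approved version of {rec.guideline_id}")
    elif approved != rec.guideline_version:
        problems.append(
            f"{rec.guideline_id} {rec.guideline_version} is not the approved "
            f"version ({approved})"
        )
    return problems
```

An empty list means the suggestion is consistent with what the institution has approved. The point of the sketch is that the check is trivial once `LocalStandards` is populated and maintained; without that content, there is nothing to check against.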

What the Good Ones Do Differently

The health systems achieving durable value from clinical AI share a common foundation: they’ve built—or are actively building—robust clinical content governance before, or in parallel with, AI deployment.

This means a maintained library of clinical pathways, guidelines, and protocols that reflects the institution’s current standards. Content that’s locally adapted, regularly reviewed, and version-controlled. An editorial process—however lightweight—that ensures clinical leadership can stand behind what the AI is presenting.
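As one illustration of what “regularly reviewed” can mean in practice, the sketch below flags library items whose last review is older than their review interval. It assumes each item records a version, a last-reviewed date, and an interval set by local policy. The `ContentItem` structure, the `overdue_for_review` function, and the 365-day default are assumptions made for this example, not a standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ContentItem:
    """Hypothetical record for one pathway, guideline, or protocol."""
    title: str
    version: str
    last_reviewed: date
    review_interval_days: int = 365  # assumed default; set by local policy


def overdue_for_review(library: list[ContentItem], today: date) -> list[ContentItem]:
    """Return items whose last review is older than their review interval."""
    return [
        item
        for item in library
        if today - item.last_reviewed > timedelta(days=item.review_interval_days)
    ]
```

Run on a schedule, a report like this gives clinical leadership a short list of content to re-approve or retire before it reaches clinicians through an AI layer.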

This governance infrastructure serves two functions in relation to AI. First, it provides the local reference data that makes AI recommendations institution-specific rather than generic. When the AI knows this institution’s antibiogram, approved formulary, and current sepsis bundle parameters, it can recommend something specifically useful rather than something population-average.

Second, it provides the trust architecture that makes AI adoption sustainable. Clinicians follow AI-generated recommendations at scale when they believe those recommendations reflect their institution’s considered clinical standards—not a vendor’s interpretation of aggregate literature. “This is what our medical staff has approved” is a fundamentally different value proposition than “this is what the algorithm suggests.”

The Sequence Matters

Governance infrastructure first. AI acceleration second.

This sequencing is counterintuitive for health systems under pressure to show AI innovation. It’s slower in the short term and harder to demo on a conference stage.

But the alternative—AI deployment on top of absent governance—produces the mixed results now becoming visible in early-adopter data. High initial engagement. Declining trust. Clinician bypass behavior. Eventual questions about whether the AI investment is producing clinical value.

The health systems that will lead on clinical AI over the next decade are the ones currently building the content governance layer that AI needs to run on: a live, maintained, locally validated library of clinical standards, integrated into workflow, tracked for utilization, and governed by clinical leadership.

That infrastructure isn’t just an AI prerequisite. It’s independently valuable—it improves care today, before any AI is deployed. The AI then accelerates both the creation of that content and its delivery at the point of care.

Build the Foundation

Clinical AI is not a shortcut past the hard work of clinical governance. It’s a powerful tool for organizations that have done that work—and a high-cost source of alert fatigue for organizations that haven’t.

The question for any health system evaluating clinical AI is not “which platform has the best algorithm.” It’s “do we have the governance infrastructure that makes an algorithm useful?”

The good ones are building that infrastructure now.