Walk into the quality department of almost any mid-size health system and ask to see the clinical pathway for community-acquired pneumonia. There’s a good chance they have one. They may have had it for years.

Now ask how often it’s used.

That question tends to produce a different kind of answer: uncertainty, a mention of EHR integration challenges, a reference to a committee that’s been working on it. Sometimes silence.

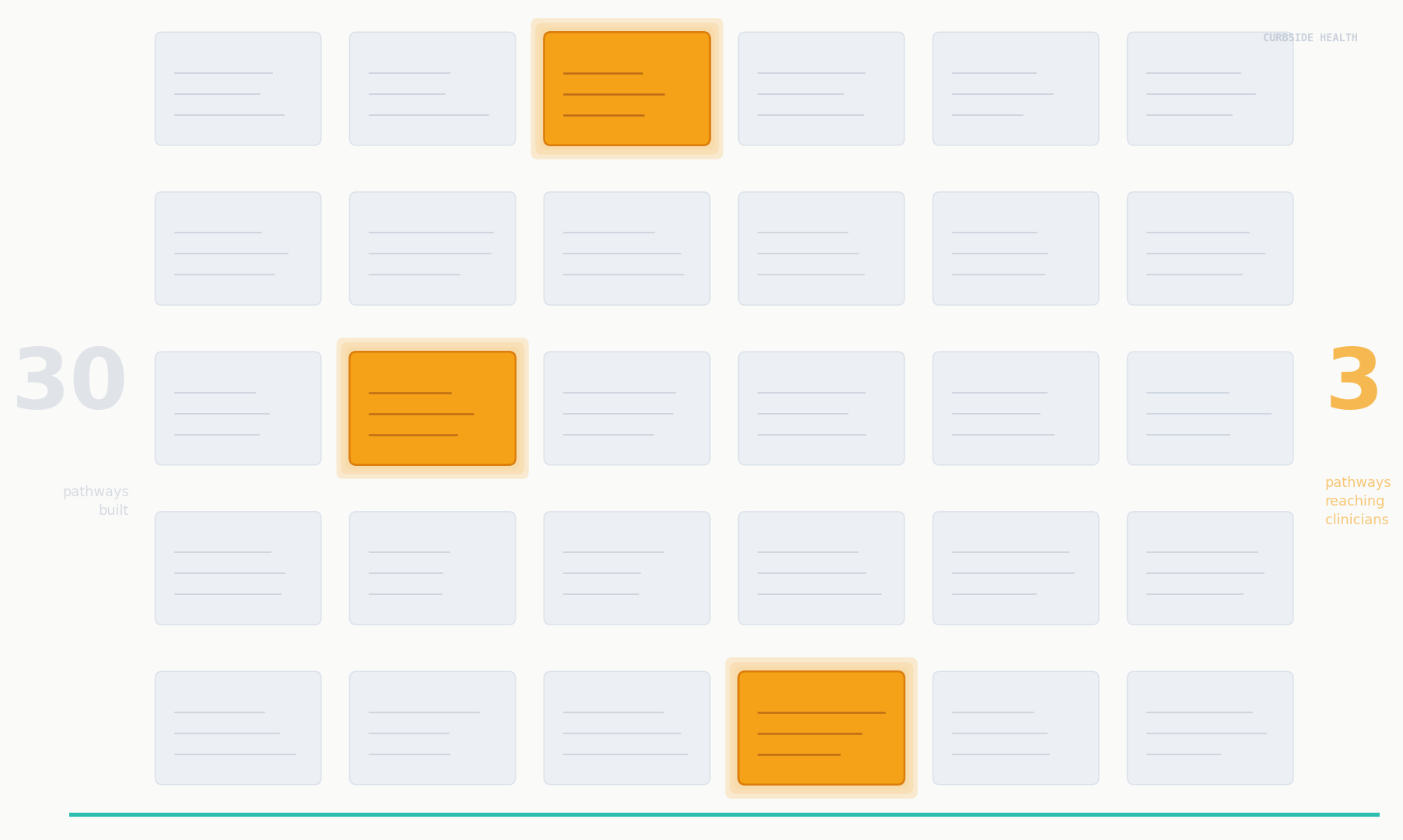

This is the utilization gap: the distance between “we have a pathway” and “our clinicians follow it.” It is arguably the most common failure mode in clinical quality programs, and among the least measured and most consequential.

The Pathway That Exists Is Not the Pathway That Works

A clinical pathway that isn’t utilized is a document, not a tool. The distinction matters because the outcomes evidence for clinical pathways isn’t evidence for documents—it’s evidence for pathways that are actually used, at the right moment, by the right clinicians.

The value of an evidence-based protocol doesn’t accrue from its existence. It accrues from its utilization. If benefit scales with use, a well-designed sepsis bundle that’s retrieved 40% of the time can deliver at best about 40% of its potential outcome improvement. The rest disappears into the utilization gap.
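The arithmetic behind that claim can be sketched in a few lines. This is a minimal illustration, not a clinical model: it assumes benefit scales linearly with utilization, and the function names and the figure of 10 avoidable adverse events per 1,000 cases are hypothetical.

```python
def realized_improvement(potential: float, utilization: float) -> float:
    """Improvement actually delivered, assuming benefit is linear in use.

    potential   -- improvement if the pathway were used every time
                   (hypothetical unit: events avoided per 1,000 cases)
    utilization -- fraction of eligible encounters where it is used (0 to 1)
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return potential * utilization


def lost_to_gap(potential: float, utilization: float) -> float:
    """Improvement left on the table because the pathway was not used."""
    return potential - realized_improvement(potential, utilization)


# Hypothetical example: a bundle that could avoid 10 events per 1,000 cases,
# retrieved in 40% of eligible encounters.
print(f"realized: {realized_improvement(10.0, 0.4):.1f}")  # realized: 4.0
print(f"lost:     {lost_to_gap(10.0, 0.4):.1f}")           # lost:     6.0
```

In practice the relationship is unlikely to be exactly linear, so the product is better read as a rough ceiling than as a forecast.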

For most health systems, the utilization rate of their clinical pathways is not known—not because the question isn’t important, but because the infrastructure to answer it doesn’t exist.

Why Utilization Fails

The pathways that don’t get used share common failure patterns.

Location. The pathway lives in a shared drive, a SharePoint folder, or an intranet page that no one navigates to during a clinical encounter. Busy clinicians follow protocols that are in their workflow, not protocols they have to retrieve.

Timing. Access at the moment of decision is different from access in principle. A pathway that requires three steps to find is effectively inaccessible in the emergency department at 2 a.m.

Currency. Pathways that haven’t been updated in two years lose clinician trust. If the guideline predates the last antibiogram update or the most recent Infectious Diseases Society of America (IDSA) guidance change, clinicians reasonably question whether it reflects current best practice.

Awareness. When new pathways are released or existing ones are updated, most health systems have no mechanism to ensure that clinicians know. Email announcements disappear. Policy acknowledgment clicks are completed without reading. The protocol exists—but nobody told the night shift.

Closing the Gap Requires More Than a Better Document

The utilization gap cannot be closed with better formatting or a nicer interface. Closing it requires integrating the pathway into the clinical workflow, not storing it adjacent to the workflow.

That means EHR integration—surfacing the relevant pathway at the moment of ordering, not as a reference available on request. It means attestation and awareness infrastructure that ensures clinicians know when protocols exist and when they change. And it means measurement: knowing, by topic, by unit, by provider, how often the pathway is being used—so that quality leadership can distinguish between a protocol that’s being followed and a protocol that’s being bypassed.
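The measurement piece can be sketched as a simple aggregation. The example below is a minimal sketch, assuming an export of eligible encounters is available with a flag for whether the pathway was used. The `Encounter` record, its field names, and the sample rows are all hypothetical and do not reflect any particular EHR's schema.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple


class Encounter(NamedTuple):
    """One eligible encounter (hypothetical schema)."""
    topic: str          # e.g. "CAP" or "sepsis"
    unit: str           # e.g. "ED" or "ICU"
    provider: str       # provider identifier
    pathway_used: bool  # True if the pathway was used during the encounter


def utilization_by(encounters: Iterable[Encounter], key: str) -> Dict[str, float]:
    """Return the utilization rate grouped by 'topic', 'unit', or 'provider'."""
    if key not in ("topic", "unit", "provider"):
        raise ValueError(f"unsupported grouping key: {key}")
    used: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for enc in encounters:
        group = getattr(enc, key)
        total[group] += 1
        used[group] += int(enc.pathway_used)
    # Every group in `total` has at least one encounter, so no division by zero.
    return {group: used[group] / total[group] for group in total}


# Hypothetical sample data.
sample = [
    Encounter("CAP", "ED", "provider_a", True),
    Encounter("CAP", "ED", "provider_b", False),
    Encounter("CAP", "ICU", "provider_c", True),
    Encounter("sepsis", "ED", "provider_a", False),
]

for dimension in ("topic", "unit", "provider"):
    print(dimension, utilization_by(sample, dimension))
```

The hard part is not the aggregation but the denominator: deciding which encounters count as eligible for a given pathway, and capturing a reliable signal that the pathway was actually used rather than merely available.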

The path from “we have a pathway” to “our clinicians follow it” is an infrastructure journey, not a documentation project. Organizations that conflate the two invest in writing protocols without investing in delivering them—and then measure the wrong thing when outcomes disappoint.

The Gap Is Solvable

The gap between evidence and practice is not primarily a knowledge problem. Clinicians generally know what good care looks like. It’s an infrastructure problem—a failure to get the right guidance to the right clinician at the right moment, in a form they trust and a location they’ll actually use.

The utilization gap is solvable. But it requires a different investment than most health systems have made. Not more documents—better delivery.