The shadow AI statistics are striking. The framing around them is mostly wrong.

Most coverage treats shadow AI as a compliance problem — unauthorized tools, data privacy exposure, HIPAA liability. Those risks are real and worth managing. But if that's the whole story you take from the numbers, you're missing the more consequential signal.

When 20% of clinicians are using AI tools their institutions haven't approved — and more than 40% of healthcare workers know colleagues who are — you're not looking at a discipline problem. You're looking at the early adopter segment of a diffusion curve that's moving faster than institutional governance can track.

Understanding where we are on that curve, and what it predicts about where we're heading, is the more useful leadership question.

What Shadow AI Is Actually Telling You

The data is worth sitting with. Of 500+ healthcare workers surveyed in late 2025, 45% of those using unauthorized AI cited faster workflow as their primary reason. A quarter cited better functionality than what their institution had officially approved. One in ten were applying unapproved AI to direct patient care — not administrative convenience, but clinical encounters.

These aren't bad actors. They're clinicians and administrators trying to do their jobs in an environment where the approved tools aren't keeping pace with what's available. The institution has a procurement cycle and a compliance framework. The clinician has a patient in front of them and ChatGPT on their phone.

The compliance framing obscures the real finding: demand for AI in clinical and administrative workflows has outrun institutional supply. That gap is what shadow AI represents. The governance data helps explain why it persists: administrators are more than three times as likely as clinicians to be actively involved in AI policy development (30% versus 9%). The people setting AI policy are largely not the people using AI, which explains both the gap and the frustration.

The Adoption Arc

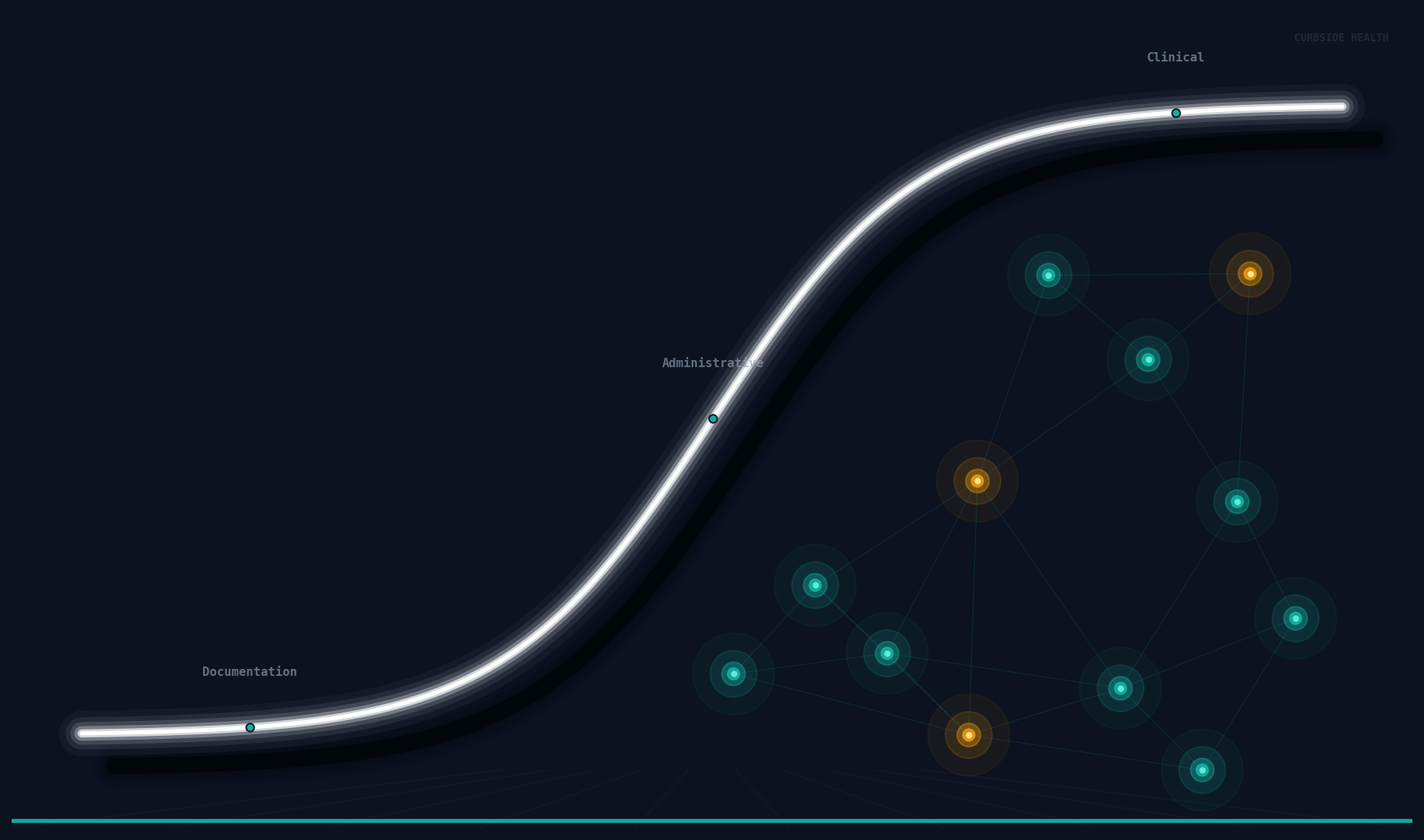

The broader AI adoption data tells a coherent story about the sequence in which AI enters healthcare organizations — and it maps almost precisely onto institutional risk tolerance at each stage.

The first wave was documentation. Ambient clinical AI — tools that listen to a patient encounter and generate the note — became the most universally adopted AI use case in medicine, with virtually all major health systems reporting adoption activity and more than half reporting high success. The entry point is low-stakes: the AI isn't making clinical decisions, it's writing the note afterward. Physician burnout is severe enough to override most institutional caution when the value is that immediate and that visible.

The second wave is administrative. In two years, billing automation has grown from 36% to 61% of health systems, and scheduling AI from 51% to 67%. These tools operate where errors are recoverable, the feedback loop is fast, and the ROI is legible in ways finance departments understand. They are crossing the chasm from early adopters to early majority right now.

The third wave (clinical decision support, risk stratification, treatment recommendation) is where the adoption curve starts to look different. Imaging and radiology AI has achieved broad deployment, with 90% of organizations reporting at least partial adoption, but it is also the most interpretable clinical AI use case and the one that sits closest to the radiologist's existing workflow. Move into sepsis prediction, empiric antibiotic selection, clinical risk scoring: only 38% of organizations report high success, despite significant investment. Broad deployment. Limited success. That gap requires explanation.

Crossing the Chasm — Into the Clinic

Geoffrey Moore's Crossing the Chasm describes a specific discontinuity in technology adoption: the gap between early adopters, who embrace new technology on the strength of its promise, and the pragmatic early majority, who require demonstrated performance in their specific context before they commit.

Healthcare has crossed the chasm on documentation AI. It's mid-chasm on administrative AI. It is standing at the edge of the clinical chasm — the one that will be hardest to cross, for reasons that are structural rather than technological.

Clinical AI requires something documentation and administrative AI do not: clinician trust that the recommendation is right for this patient, at this institution, based on this institution's own standards. A documentation AI that produces a suboptimal note can be corrected in thirty seconds. A clinical AI that recommends the wrong antibiotic — or the right antibiotic for a different institution's antibiogram — produces harm that isn't always caught in real time.

This is why the data shows the split: broad deployment, limited success. Organizations are acquiring the technology. They are not yet building the governance infrastructure that makes the technology trustworthy in clinical contexts. The chasm isn't about the algorithm. It's about what sits underneath the algorithm: a validated, locally specific, maintained foundation of clinical standards that gives the AI recommendation actual institutional authority.

Shadow AI in clinical settings is the most visible symptom of this gap. When a clinician uses unauthorized AI in direct patient care, as one in ten already report doing, they've made a unilateral judgment that the unapproved tool's output is reliable enough to act on. Sometimes that judgment is correct. The problem is that it's ungoverned: no local validation, no institutional accountability for the output, no mechanism to learn from outcomes when the AI is wrong.

What Governance Makes Possible

The institutions that will successfully cross the clinical chasm are not the ones that respond to shadow AI with stricter enforcement. Prohibition doesn't reduce demand; it drives usage further underground, making it less visible and therefore harder to govern.

The institutions that will lead are the ones that close the gap between what clinicians access informally (capable, fast, general-purpose AI) and what they can access through institutional channels (locally validated, governed, accountable AI that carries the authority of the medical staff behind it).

That requires governance infrastructure: a maintained library of clinical standards, locally adapted and version-controlled, that serves as the validation layer for AI recommendations. When the AI recommends an antibiotic, the clinician's question — conscious or not — is whether this reflects their institution's considered judgment. If the answer is clearly yes, adoption follows. If the answer is uncertain, the recommendation gets clicked past in the same gesture as every other EHR alert.

The good news embedded in the shadow AI data is that demand is established. Clinicians want these tools. They're using them without permission because the approved alternatives don't meet the need. The institutional challenge is not to create demand — it's to channel existing demand into governed pathways that protect patients and build the trust infrastructure clinical AI requires.

The adoption arc is moving. The question for health system leadership is whether the governance architecture is moving with it.

Curbside Health builds clinical content governance infrastructure — the layer that makes AI recommendations locally valid, clinician-trusted, and institutionally accountable.